I think this is an interesting concept, related to other kinds of debt we encounter. In 100⁝ Software Engineering it would be similar to 100d⁝ Technical Debt. The idea is that we can move faster by offloading work to AI, but later we pay the price with a lower understanding of the output and a weaker ability to reason about it. This is kind of obvious, but it might have global consequences on an unprecedented scale.

I also understand that similar concerns have probably been raised ever since we invented writing, and to some extent those worries were justified. The invention of writing reduced our ability to memorize. Then the arrival of radio, TV, the internet, and mobile phones also, each in a different way, pushed our cognitive abilities toward laziness, at least a little.

Certainly there is no free lunch. On the one hand, using AI helps us deliver work faster and with less effort. On the other hand, it decreases creativity, critical thinking, and our understanding of the output of “our” work. I have seen this happen in my own experience. When I do not dive into the details and rely only on AI, only a very weak understanding arises. Without real understanding, new knowledge is attached more weakly to the root of what I already know. The leaves of knowledge just fall off quicker, let’s say. It is also much harder to look at the problem from the other side, to rotate it in memory and view it from different angles. Applying the knowledge in different contexts is harder too.

So right now I consider AI good for organizing information, but I am becoming very cautious when it comes to creation. However, in a world where everybody is using it, going without it carries a risk of being left behind.

I can also treat it as a force multiplier for questions. Used that way, it adds a dimension to my mental model. However, if I treat it as a source of answers, it is going to flatten that model.

Not all of us can be the next Einstein. But I believe we should always be the best version of ourselves, and AI used incorrectly certainly does not help with that pursuit. I think this is related to entropy: you have to put in work to create something and to keep it in order. We should not outsource our thinking. But maybe it will turn out like this: we have a problem with obesity, yet at least some people go to the gym and put in the work. We might see the same pattern here later on.

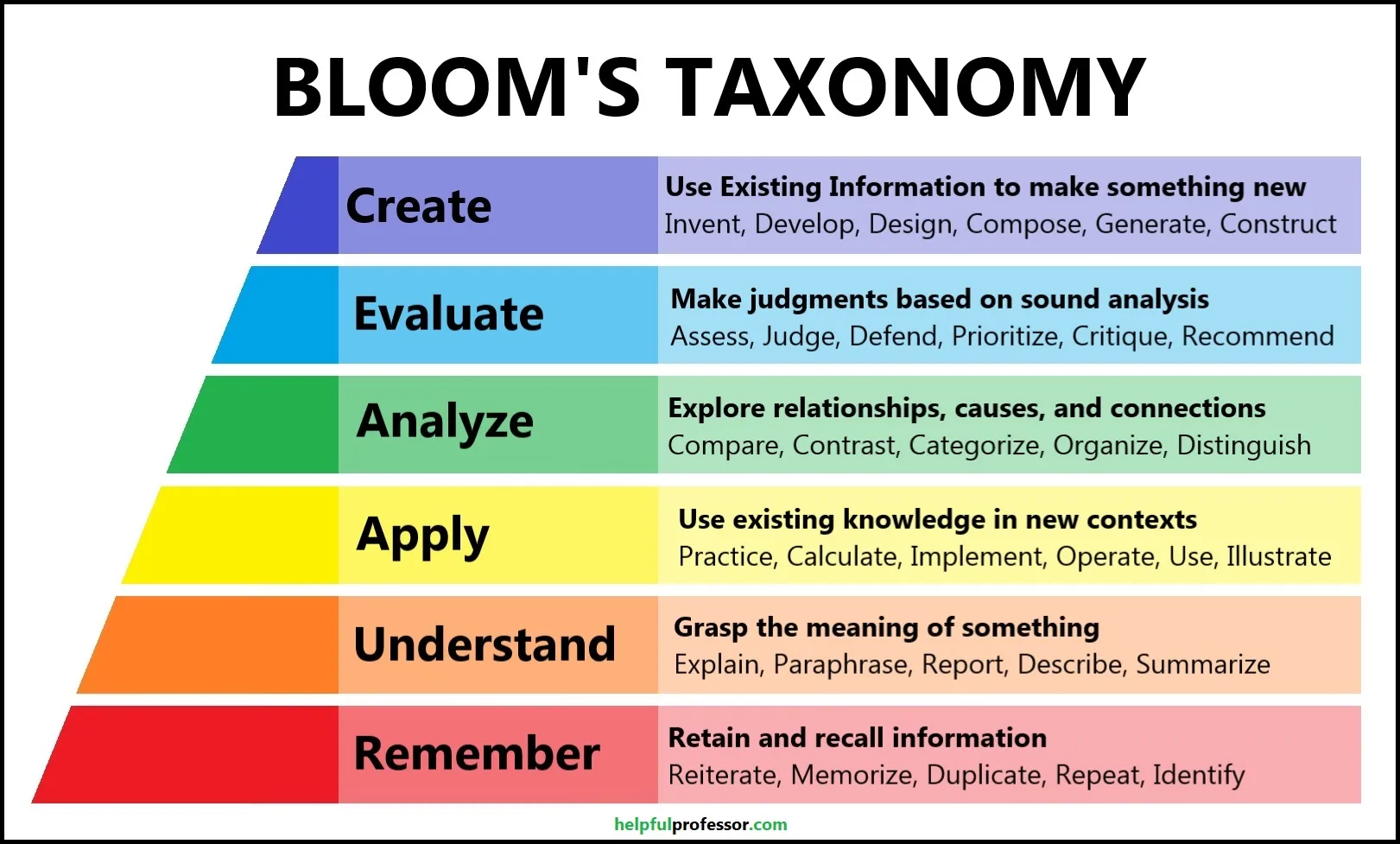

There is a framework called Bloom’s taxonomy (see the picture above).

Doing things with AI is like operating on the lowest level of Bloom’s taxonomy. When I need to analyze, evaluate, or create, I build a completely different mental model, let’s say a 3D one. Working with AI, however, creates much weaker connectivity in our brain, so it is like having a 2D model.

It is also related to 20251112113806⁝ Naur Theory (Programming as Theory Building), because it is basically the same problem. If you do not build something yourself, you cannot reason about it later.

Also, AI output has this 42i1⁝ uncanny valley feeling: something is odd, but it is difficult to express what exactly is wrong.

sources

N. Kosmyna et al., “Your Brain on ChatGPT: Accumulation of Cognitive Debt when Using an AI Assistant for Essay Writing Task,” arXiv.org, Jun. 10, 2025. https://arxiv.org/abs/2506.08872