Coding Sessions: Learnings

02/2026 (Codex 5.3) Kubernetes Cluster

For me, Codex 5.3 was very good in one specific area: it helped me build a full Kubernetes cluster on OVH.

What I liked most was that it did not only generate random commands or YAML files.

It helped with the full process:

- setting up the cluster

- preparing and fixing templates

- downloading and adjusting Helm charts

- making corrections after errors

- improving deployment configs

- handling scaling topics

- solving problems during the work

It was able to react on the fly when something failed. That was important. It felt less like “code generation” and more like real support during implementation.

Fast progress, step by step

In this workflow, I could move quickly:

- create the cluster

- add components

- test things

- fix issues

- continue

I used a lot of context/tokens, but they were not wasted. The tokens were spent because we (the model and I :)) were actually delivering features and infrastructure changes, not repeating the same mistakes.

That made a big difference for me.

I also learned a lot during the session

Another important thing: I learned many practical tricks while working.

This is one of the best parts of using AI in this way. It is not only “do it for me.” It can also:

- show a practical solution

- suggest another option

- explain what is wrong

- help you understand the process

After the session, I could ask it to create a Markdown file with a summary of the work.

That was very useful. Later I could read it and understand:

- what was changed

- what problems happened and why

- how those problems were solved

- why some configuration choices were made

So the session was not only about getting a result. It also created documentation I could study later.

My perspective:

I want to be clear: I was never a Kubernetes expert.

That is exactly why this experience was important for me.

Being able to build my own cluster in just a few hours (1-2 hours) was something I did not expect. And it was not only fast; it also helped me learn while doing the work.

Final thoughts

In this OVH + Kubernetes scenario, Codex 5.3 worked very well for me.

It helped me:

- build faster

- fix issues during the process

- adjust templates and Helm configs

- keep moving without getting stuck

- create documentation after the session

For someone who is not a Kubernetes expert but wants to build something real and learn at the same time, this was a very useful way to work.

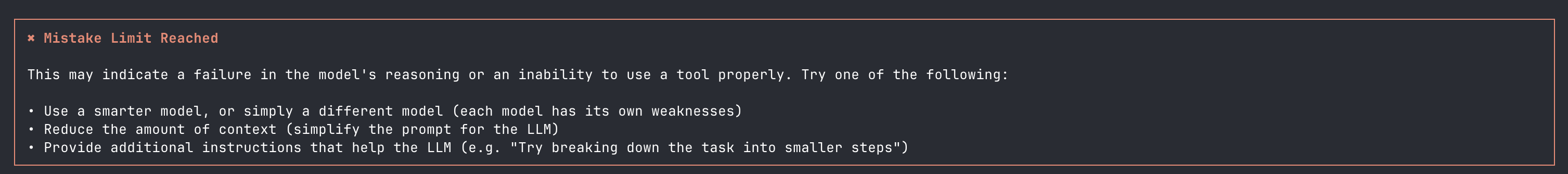

02/2026 (Kimi K2.5)

After I used up my limits with the Codex and Claude models, I gave Kimi K2.5 a try with Kilo Code.

But it seems that even when some models look close together on paper, there is something off with many of them.

Some models are smart in “benchmarks” but sloppy on different levels (and sometimes this is related to the specific orchestrator / client):

- wrong command format

- wrong file path assumptions

- forgetting to inspect before editing

- too many tool calls without updating the plan

- weak long-context stability

- cannot handle the end of the context correctly (compaction and creating a new context from the existing one)

- keeps pushing the same wrong approach

- bad repo navigation

- bad shell tools usage

- bad test-driven iteration

So eventually I got from Kilo while using MoonshotAI: Kimi K2.5

In comparison, using Codex 5.3 was a great experience. I was burning through the 250k-token context window very quickly, but it was always because it was delivering features quickly, exactly as I wanted, and the context compaction worked really well.
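To make "context compaction" a bit more concrete, here is a minimal Python sketch of the general idea as I understand it: when the conversation gets close to a token budget, the older messages are replaced by one short summary and only the most recent messages are kept as they are. This is not how Codex or Kilo actually implement it. The names `Message`, `estimate_tokens`, `summarize` and `compact` are hypothetical, and the "4 characters per token" rule is only a crude assumption.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Message:
    role: str
    text: str


def estimate_tokens(text: str) -> int:
    # Crude assumption: roughly 4 characters per token.
    return max(1, len(text) // 4)


def total_tokens(history: List[Message]) -> int:
    return sum(estimate_tokens(m.text) for m in history)


def summarize(messages: List[Message]) -> str:
    # Placeholder: a real client would ask the model to write this summary.
    # Here we only keep the first 40 characters of each old message.
    return "Summary of earlier work: " + "; ".join(m.text[:40] for m in messages)


def compact(history: List[Message], budget: int, keep_recent: int = 2) -> List[Message]:
    # Nothing to do while the history still fits into the budget.
    if total_tokens(history) <= budget:
        return history
    # Too few messages to split into "old" and "recent".
    if len(history) <= keep_recent:
        return history
    old, recent = history[:-keep_recent], history[-keep_recent:]
    # Replace all old messages with a single summary message.
    return [Message("system", summarize(old))] + recent


if __name__ == "__main__":
    history = [Message("user", f"step {i} " + "x" * 400) for i in range(6)]
    compacted = compact(history, budget=300)
    print(len(history), "->", len(compacted), "messages")
    print(total_tokens(history), "->", total_tokens(compacted), "estimated tokens")
```

The important design choice is that the newest messages stay untouched, so the model still sees exactly what it was working on. The quality of the summary step is where tools can differ a lot in practice.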

12/2025 (Antigravity with Gemini 3 PRO / high)

I worked with Gemini 3 and Antigravity.

Antigravity felt like a good piece of software. It showed me more clearly what modern AI-supported development can look like. It was one of those moments when you start to see a new way of working, not only a new tool.

What worked well

For me, Antigravity was very strong in:

- planning features: modifying the plan, handling my input, letting me participate, and commenting on existing plans

- organizing work into steps

- suggesting a good implementation path

It was also quite good at execution. It could help move the work forward and handle many tasks in a practical way.

Because of that, the overall experience was good, especially in the earlier and middle parts of a session.

Where it struggled

The main problem was very long sessions.

When the context was getting close to the limit, the model sometimes started to behave in a strange way. Near the end, it could become unstable and produce random outputs that did not match the task anymore (for example simple text like “hello”, “hi”, etc.).

So even though it was good at planning and decent at execution, it was less reliable in long runs with heavy context.

Even with that limitation, this was still an important step.

It helped me understand how AI can support development in a more structured way:

- first planning

- then execution

- then iteration

And Antigravity, as a software experience, really helped me see how useful this style of development can be when the workflow is designed well.

Final thoughts

My summary from that time (December 2025) is simple:

- Gemini 3 PRO / Antigravity was very good for feature planning

- it was solid in execution

- but it had problems in very long sessions near the context limit